Welcome to A People's Atlas of Nuclear Colorado

Navigating the Atlas

Using the buttons on the left, you can browse the Atlas's artworks and scholarly essays, explore geolocated material on a map, and learn more about the project's contributors.

If you would like to contribute materials to the Atlas, please reach out to the editors: Sarah Kanouse (s.kanouse at northeastern.edu) and Shiloh Krupar (srk34 at georgetown.edu).

Cover Image by Shanna Merola, "An Invisible Yet Highly Energetic Form of Light," from Nuclear Winter.

Atlas design by Byse.

Funded by grants from Georgetown University and Northeastern University. Initial release September 2021.

Essay

Although cloaked in secrecy at the time, Colorado’s role in powering the U.S. nuclear complex is now abundantly evident due to extensive and ongoing cleanup efforts. Still largely concealed is the state’s historic exposure to contamination from radioactive fallout, with Colorado falling squarely in the band of heaviest global fallout during the era of atmospheric testing at the Nevada Test Site from 1951 to 1962.

During the atmospheric testing period, radioactive fallout was publicly portrayed as an insignificant, if pesky, by-product of using nuclear weapons. However, a secret policy debate involving the Atomic Energy Commission (AEC) and the Department of Defense ended with the recognition that fallout’s inevitable cumulative threat served as a potent, if unacknowledged, constraint on the actual use of nuclear weapons. AEC scientists recommended against locating a nuclear test site in Nevada unless forced by a wartime emergency. Although technically a “police action” rather than a war, the Korean Conflict (1950–1953) allowed the Department of Defense to pressure the AEC to proceed with testing at the Nevada Test Site, which lay upwind of most of the nation’s population.

Although touted as a “safe” practice, atmospheric testing lasted only fourteen years from the time the first Soviet nuclear test set the arms race into overdrive. Despite the otherwise confrontational tone of the Cold War at its height, the issue of fallout from atmospheric testing was so significant that the Soviet Union and the United States quickly agreed to end the practice with the 1963 Limited Test Ban Treaty.

Richard L. Miller’s 1986 account of the atmospheric testing era, Under the Cloud: The Decades of Nuclear Testing (New York: The Free Press, 1986), provides a starting point for understanding the full reach of fallout across the United States. In this exhaustive, multivolume compilation, Miller ranked U.S. counties by fallout exposure, tracing contamination and documenting plumes that tracked back to specific shots, or tests, in Nevada. Despite his extensive efforts to declassify U.S. testing documents, Miller could access neither fallout data from the American test sites in the Pacific nor data on global fallout from testing by other nations, primarily the USSR and the United Kingdom. His report thus excluded all U.S. high-yield testing, which took place entirely in the Pacific, as well as Soviet atmospheric tests with an even greater total yield.

Despite Miller’s breakthrough in prodding the government to publicly release quantifiable information on fallout, his findings should be interpreted with caution. He identified the source of most of his data as the “Weather Bureau,” whose “Special Projects Section” directed Air Force fallout sampling aircraft to collect Soviet bomb debris. The Weather Bureau operated a network of relatively ineffective ground collection stations, but it had no airborne assets that could have produced the data in Miller’s charts; this information originated with the Air Force, the only organization with the assets to collect such data. The Miller data set is not even complete for the Nevada Test Site, as further, more detailed levels of data remain classified. The Weather Bureau’s release of this information thus served as cover for a selected and sanitized data set while concealing both the source of the released data and the existence of more complete records. Despite calls in 2001 by the Centers for Disease Control and the National Cancer Institute for the release of the relevant records to better assess the risk posed by past fallout deposition, the Department of Defense insists that its more than half-century-old claims of secrecy about fallout are necessary to protect national security.

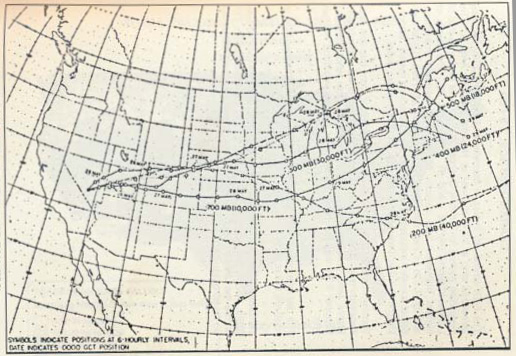

Richard Miller, Fallout map, Operation Tumbler-Snapper, May 1952, 1986, The U.S. Atlas of Nuclear Fallout

This map illustrates how Colorado’s location downwind from the Nevada Test Site exposed it to fallout carried by prevailing circulation patterns that generally ran from west to east. Each track links the positions, at regular time intervals, of the plume detected and sampled from a single shot. While the map graphic suggests narrow, focused plumes, in practice they were more diffuse, with hazy, feathered edges as each plume mixed with the air mass it traveled through. Their width varied greatly, as did the mix of radiation present at different altitudes; in this case, fallout was monitored at four altitudes ranging from 10,000 to 40,000 feet. Deposition onto the surface beneath these tracks varied from undetectable to intense. The greatest factor in whether a plume deposited fallout on the ground was “rainout,” which occurred when the plume happened to pass through or under rain that washed fallout out of it. Colorado’s highest elevations received greater amounts of fallout as the 10,000-foot plume collided with the Rocky Mountains.

Contrary to what had originally been assumed, stratospheric circulation prevented fallout from dispersing evenly over the planet’s surface after it “cooled” on its way to earth. Instead, fallout was pulled from the North Pole and the Equator toward a band centered on 45 degrees North latitude, where it fell at a rate almost eight times what would be expected if it were evenly distributed over the earth. The ironic reality was that a nuclear war would return the most radiation both to heavily populated areas and to Colorado, the source of much of the uranium that powered the weapons complex. RANGER BAKER 2 (February 2, 1951, 8 kilotons) was the first shot in a National Cancer Institute study tracking fallout as it moved away from the Nevada Test Site, and it made La Plata County (Durango) the “first worst” county in the United States in terms of total fallout deposition. Of the 3,093 jurisdictions surveyed nationwide, three of the twenty most fallout-contaminated counties are in Colorado.

Early in the Cold War, the AEC recognized that fallout presented a potential limit on the capacity to wage nuclear war. In 1949, the Project Gabriel study asked: how many U.S. nuclear weapons could detonate in the Soviet Union before they endangered Americans? The apparent answer, about 60 megatons of total yield, seemed sufficient to the military when its weapons were in the 15- to 20-kiloton range. (The power of a nuclear weapon is expressed as the amount of conventional explosive, or TNT, that would be needed to release the same amount of energy. A megaton is equal to one million tons of TNT, while a kiloton is equal to 1,000 tons. Thus, 60 megatons is 3,000 times the yield of a 20-kiloton blast.) However, once the Soviet Union tested its first nuclear device in late 1949, the Joint Chiefs of Staff sought to maintain nuclear primacy by rapidly developing thermonuclear weapons with vastly higher yields. Truman ordered the thermonuclear project forward over the objections of the AEC’s General Advisory Committee, led by Robert Oppenheimer and including other top scientists concerned about the global risks presented by such weapons. The first thermonuclear weapon was detonated in 1952 with a 10-megaton yield, 500 times more powerful than the first atomic bomb tested at Trinity, New Mexico. In the years that followed, the Air Force acquired thousands of thermonuclear warheads whose total yield exceeded the 60-megaton threshold for supposedly “safe” levels of fallout many times over. Lowry Air Force Base outside Denver housed one of the first ICBM installations, whose eighteen missiles carried warheads with a total yield of 72 megatons. If those missiles had ever been launched, the fallout from their detonations would surely have returned to haunt Colorado, and Lowry was but one of dozens of such bases.

The 60-megaton limit established by the Project Gabriel study was carefully hidden from the public and kept off-limits for discussion even among the military insiders who testified at the 1954 hearing that ultimately stripped Robert Oppenheimer of his security clearance. The hearing’s focus on Oppenheimer’s loyalty distracted from the Air Force’s efforts to undermine his wide influence among scientists and the public on behalf of nuclear restraint, in favor of Edward Teller’s narrow but congenial thermonuclearism.

Air Force Magazine, Vol. 42, No. 12, December 1959, Air Force Association

Eventually, the Air Force circumspectly acknowledged that fallout was what had precipitated Oppenheimer’s call to publicly discuss the pragmatic dangers of nuclear war. In the December 1959 issue of Air Force, the magazine of the service’s booster organization, the Air Force Association, the editors reluctantly admitted that fallout would make any war conducted with thermonuclear weapons largely pointless. These were the very weapons that Oppenheimer’s General Advisory Committee had warned against and that the service had nonetheless stubbornly developed over the previous decade, including those already on order for Lowry.

"Radioactivity, therefore, was the factor which made of the hydrogen bomb the first true area weapon of history. Subsequently, it became apparent that this very effectiveness…tends to make the weapon quite unmanageable and may prevent its utilization… Widespread, heavy fallout…would probably also “backfire” against friendly nations and cause heavy casualties among the very peoples, including one’s own, whom the military operations were designed to protect. Fallout may actually preclude success in war. The most basic objection to uncontrolled fallout, in fact, is that it would tend to render war unmanageable as a rational tool of policy and national security."

Air Force Association, “The Clean Weapons Problem,” Air Force 42, no. 12 (December 1959): 36-37.

Thus, in just five years, fallout went from being an intelligence source too sensitive to mention directly, even behind closed doors at Oppenheimer’s security hearing, to being discreetly acknowledged by those closest to Air Force leadership as a limit on nuclear war, a form of warfare that demands accomplishing as much destruction in as short a time as possible. There could be nothing leisurely about the timing of nuclear explosions, and only very limited attempts could be made to keep fireballs from contacting the surface. Worse still, there was the grim possibility of tactics, such as subsurface detonations against deeply buried missile silos, that would purposefully add to fallout’s global threat.

Nuclear weapons do not guarantee national security. On the contrary, tracking the fallout from the atmospheric testing era shows that nuclear war amounts to transnational planetary suicide. Fallout’s unavoidable and indiscriminate collateral damage makes global-scale nuclear war an act of self-harm with no rational policy outcome. Fallout gives everyone who lives under the planet’s sky a stake in reducing the threat posed by nuclear weapons, even those who remain entranced by their firepower. And while avoiding nuclear war remains a moral necessity for many, fallout is ultimately proof that peace in the nuclear era is an existential necessity for us all.

Sources

Miller, Richard L. Under the Cloud: The Decades of Nuclear Testing. New York: The Free Press, 1986.

“The Clean Weapons Problem.” Air Force 42, no. 12 (December 1959): 36-37.